This database glossary was created to provide clear, practical definitions of the terms commonly used across ScaleGrid content. It is designed for engineers, SREs, and technical decision-makers who already work with modern data infrastructure and want quick, reliable context without digging through documentation.

Each term focuses on how technologies behave in real-world environments—covering performance, scalability, availability, and operational tradeoffs. The goal is not just to define concepts, but to make it easier to understand when and why they matter in production systems.

Use this glossary as a reference while reading our blogs or evaluating architecture decisions, and as a quick way to connect key database, DevOps, and messaging concepts in one place.

Core Database Concepts

ACID

Atomicity, Consistency, Isolation, Durability — core properties that ensure database transactions execute reliably and maintain data integrity under concurrent production workloads.
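
As a rough illustration of atomicity, the sketch below uses Python's built-in sqlite3 module. The accounts table, the transfer function, and the balances helper are hypothetical names chosen for this example; the point is only that a failed transfer leaves no partial update behind.

```python
import sqlite3

def transfer(conn, src, dst, amount):
    """Move funds between two accounts atomically; roll back on any error."""
    try:
        with conn:  # commits on success, rolls back if an exception escapes
            conn.execute("UPDATE accounts SET balance = balance - ? WHERE id = ?", (amount, src))
            conn.execute("UPDATE accounts SET balance = balance + ? WHERE id = ?", (amount, dst))
            (balance,) = conn.execute(
                "SELECT balance FROM accounts WHERE id = ?", (src,)
            ).fetchone()
            if balance < 0:
                raise ValueError("insufficient funds")
        return True
    except ValueError:
        return False

def balances(conn):
    """Return the current balances as a dict keyed by account id."""
    return dict(conn.execute("SELECT id, balance FROM accounts ORDER BY id"))

# Hypothetical demo data: two accounts in an in-memory database.
conn = sqlite3.connect(":memory:")
conn.execute("CREATE TABLE accounts (id TEXT PRIMARY KEY, balance INTEGER)")
conn.executemany("INSERT INTO accounts VALUES (?, ?)", [("a", 100), ("b", 0)])
conn.commit()
```

Calling transfer(conn, "a", "b", 500) returns False and both balances stay unchanged, because the debit and the credit are undone together.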

Active-Active Architecture

A deployment topology where multiple nodes simultaneously accept reads and writes, enabling geographic distribution and higher aggregate throughput. Requires conflict resolution strategies to handle concurrent writes to the same data.

Active-Passive Architecture

A high-availability deployment model where a primary node handles all write operations while one or more standby nodes remain ready to take over on failure. Standby nodes may serve read traffic in some configurations, but must be explicitly targeted. Upon failure, a standby node is promoted to primary to ensure continuity.

Asynchronous Replication

A replication method where the primary node commits transactions without waiting for replicas to acknowledge them. This allows the primary to continue accepting new operations even if replicas lag behind, improving write performance and availability at the cost of potential data loss or temporary inconsistency.

At-Least-Once Delivery

A messaging delivery guarantee where each message is delivered one or more times, even if that requires redelivery after a failure. Consumers must be designed to handle duplicate messages idempotently.
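
One common way to handle duplicates is to track message IDs that have already been applied. The sketch below assumes each message carries a unique "id" field and an "amount" field (both hypothetical) and keeps the seen IDs in memory; a production consumer would usually persist them alongside the data it updates.

```python
class IdempotentConsumer:
    """Applies each message at most once, even if it is delivered repeatedly."""

    def __init__(self):
        self.seen_ids = set()  # IDs of messages already applied
        self.total = 0         # example state mutated by messages

    def handle(self, message):
        """Apply the message; return False if it was a duplicate."""
        msg_id = message["id"]
        if msg_id in self.seen_ids:
            return False  # redelivery: skip the side effect
        self.total += message["amount"]
        self.seen_ids.add(msg_id)
        return True
```

Delivering the same message twice changes the total only once, which is the property at-least-once delivery relies on.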

Automatic Failover

A cluster-triggered process where a standby node is promoted to primary without human intervention, typically initiated by heartbeat timeout or quorum loss detection. Contrasts with manual failover, which requires operator action.

Backup and Recovery

The processes used to create copies of data and restore systems after failures. Automating them supports disaster recovery and reduces operational risk in production environments.

BASE

Basically Available, Soft state, Eventual consistency — a model used in distributed systems to prioritize availability over strict transactional consistency, accepting temporary inconsistency in exchange for improved fault tolerance and partition resilience.

Bring Your Own Cloud (BYOC)

A deployment model where infrastructure is hosted within the customer’s cloud account, while provisioning, automation, and operational management are handled by a third-party service provider.

Cache

A high-speed data layer, typically in-memory (e.g., Redis), that stores frequently accessed data to reduce latency and offload read pressure from backend systems such as databases.
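
The read path is often implemented as a cache-aside pattern: check the cache first and fall back to the backend on a miss. The sketch below uses a plain dictionary in place of a real cache such as Redis, and the CacheAside class and its load_from_backend callback are illustrative names, not part of any library.

```python
class CacheAside:
    """Minimal cache-aside wrapper around a slower backend lookup."""

    def __init__(self, load_from_backend):
        self._load = load_from_backend  # function called on a cache miss
        self._cache = {}
        self.backend_calls = 0          # counts how often the backend was hit

    def get(self, key):
        if key in self._cache:
            return self._cache[key]     # cache hit: no backend round trip
        self.backend_calls += 1
        value = self._load(key)
        self._cache[key] = value        # populate the cache for later reads
        return value

    def invalidate(self, key):
        """Drop a key so the next read fetches fresh data from the backend."""
        self._cache.pop(key, None)
```

Repeated reads of the same key reach the backend only once until the key is invalidated.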

Change Data Capture (CDC)

A method of capturing and emitting data changes (inserts, updates, deletes) as structured events for downstream systems, enabling integration, analytics, and event-driven architectures.

Cluster

A group of independent nodes coordinated to operate as a single system, providing capabilities such as scalability, high availability, and fault tolerance.

Column-Oriented Storage (Columnar)

A storage format where data is organized by column, enabling faster analytical queries and efficient compression for large datasets.

Connection Pooling

A technique that reuses open database connections instead of opening a new one for each request, reducing connection overhead and improving resource efficiency and stability under high-concurrency workloads.
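
A minimal sketch of the idea, using only the Python standard library: a fixed number of SQLite connections are opened once and handed out on demand. The ConnectionPool class is a hypothetical illustration; real deployments would normally rely on a driver's or proxy's pooling rather than hand-rolled code.

```python
import queue
import sqlite3
from contextlib import contextmanager

class ConnectionPool:
    """Fixed-size pool that hands out pre-opened connections."""

    def __init__(self, size, database=":memory:"):
        self._idle = queue.Queue(maxsize=size)
        for _ in range(size):
            # check_same_thread=False lets a connection be used by other threads
            self._idle.put(sqlite3.connect(database, check_same_thread=False))

    @contextmanager
    def connection(self, timeout=1.0):
        # Blocks until a connection is free; raises queue.Empty on timeout.
        conn = self._idle.get(timeout=timeout)
        try:
            yield conn
        finally:
            self._idle.put(conn)  # return the connection instead of closing it
```

With a pool of size 1, two consecutive `with pool.connection()` blocks receive the same connection object, so the cost of opening it is paid only once.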

Consistency Model

The rules that define how and when data updates become visible across distributed nodes, influencing application correctness and latency tradeoffs.

Data Lake

A centralized repository for storing raw structured and unstructured data, designed for large-scale processing, analytics, and machine learning workloads.

Data Model

The structure that defines how data is organized, stored, and accessed, such as relational, document, or key-value models.

Data Warehouse

A system optimized for querying and analyzing large volumes of historical and aggregated data for business intelligence.

Database Management System (DBMS)

Software that enables the creation, storage, retrieval, and management of data, while providing core capabilities such as querying, security, concurrency control, and data integrity. Examples include PostgreSQL, MySQL, and MongoDB.

Database-as-a-Service (DBaaS)

A cloud delivery model where database infrastructure, operations, backups, and version management are handled by a provider, allowing teams to use databases without managing the underlying systems. ScaleGrid offers fully managed DBaaS across AWS, Azure, and Google Cloud.

Deadlock

A condition where multiple transactions block each other by holding locks on resources required by others, requiring detection and resolution mechanisms.
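
Detection is commonly described in terms of a wait-for graph, where a cycle means a deadlock. The sketch below assumes the graph is given as a dictionary mapping each transaction to the transactions it is waiting on; find_deadlock is an illustrative helper, not the algorithm of any specific database.

```python
def find_deadlock(wait_for):
    """Return a list of transactions forming a cycle, or None if there is none."""
    visiting, done = set(), set()

    def visit(txn, path):
        if txn in done:
            return None
        if txn in visiting:
            # txn is already on the current path: the tail of the path is a cycle
            return path[path.index(txn):]
        visiting.add(txn)
        for blocker in wait_for.get(txn, ()):
            cycle = visit(blocker, path + [txn])
            if cycle:
                return cycle
        visiting.discard(txn)
        done.add(txn)
        return None

    for txn in wait_for:
        cycle = visit(txn, [])
        if cycle:
            return cycle
    return None
```

Once a cycle is found, a database typically resolves it by aborting one of the transactions involved so the others can proceed.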

Denormalization

The intentional introduction of redundancy into a schema to improve read performance, often used in high-throughput or latency-sensitive workloads.

Document Store

A database model that stores data as flexible, schema-less documents, enabling rapid iteration and scalability in modern applications.

Embedding (Vector Embedding)

A numerical representation of data — text, images, or other content — as a fixed-length vector of floating-point numbers. Embeddings capture statistical patterns in data that often correspond to semantic relationships, forming the foundation of vector search and AI-powered retrieval systems.

Event Sourcing

An architectural pattern where state changes are recorded as an immutable sequence of events rather than overwriting current state. The current state can be reconstructed by replaying the event log, enabling auditability and temporal queries.
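
To make the replay idea concrete, the sketch below rebuilds an account balance from a list of events. The event names ("deposited", "withdrawn") and the helper functions are assumptions made for this example.

```python
def apply(balance, event):
    """Apply a single event to the current balance and return the new balance."""
    kind, amount = event
    if kind == "deposited":
        return balance + amount
    if kind == "withdrawn":
        return balance - amount
    raise ValueError(f"unknown event type: {kind}")

def replay(events, upto=None):
    """Rebuild state from the log; 'upto' limits replay to the first N events."""
    balance = 0
    for event in events[:upto]:
        balance = apply(balance, event)
    return balance

# The log is append-only: new events are added, existing ones are never edited.
events = [("deposited", 100), ("withdrawn", 30), ("deposited", 5)]
```

Replaying the full log gives the current state, while replaying a prefix (for example, upto=2) answers a temporal query about what the state was at that point.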

Failover

The process of switching database operations to a standby node when the primary fails, used to maintain service continuity. Can be automatic (cluster-triggered) or manual (operator-initiated). See also Automatic Failover, Switchover.

Geo-Replication

Replication of data across multiple geographic regions to reduce latency for global users and improve disaster recovery capabilities.

Graph Database

A database designed to model and query complex relationships using nodes and edges, often used in recommendation engines and network analysis.

Health Check

A monitoring mechanism that continuously verifies the availability and responsiveness of database nodes to support automated recovery actions.

Heartbeat Monitoring

A periodic signaling mechanism between nodes used to detect whether a system or component is still operational. Missed heartbeats indicate a potential failure, which may trigger failover, recovery processes, or simply signal that a node is unavailable.
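
A simple timeout-based detector can be sketched as follows. The HeartbeatMonitor class is hypothetical, and timestamps are passed in explicitly so the behavior is easy to follow; a real monitor would read a clock and usually require several missed heartbeats before acting.

```python
class HeartbeatMonitor:
    """Flags nodes whose last heartbeat is older than a timeout."""

    def __init__(self, timeout_seconds):
        self.timeout = timeout_seconds
        self.last_seen = {}  # node name -> timestamp of last heartbeat

    def record(self, node, now):
        """Register a heartbeat received from 'node' at time 'now'."""
        self.last_seen[node] = now

    def suspected_down(self, now):
        """Return nodes that have been silent for longer than the timeout."""
        return sorted(
            node for node, seen in self.last_seen.items()
            if now - seen > self.timeout
        )
```

A node that appears in suspected_down is only a failure candidate; what happens next (failover, alerting, or nothing) is a separate policy decision.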

High Availability (HA)

A system design approach that minimizes downtime through redundancy, replication, and automated failover, used to ensure reliability in production environments.

Horizontal Scaling

Increasing system capacity by adding more nodes or instances, enabling distributed workloads and improved fault tolerance.

Hot Partition

A condition where uneven workload distribution causes a single partition to receive disproportionate traffic, leading to performance bottlenecks.

Hot Standby

A continuously updated replica that is ready to take over with minimal delay in case of primary node failure.

Index

A data structure that accelerates query performance by enabling efficient lookup of records based on search conditions.
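
The effect of an index can be observed by asking the database for its query plan. The sketch below uses SQLite through Python's sqlite3 module; the users table and idx_users_email index are made-up names, and the exact wording of the plan text varies between SQLite versions.

```python
import sqlite3

def query_plan(conn, sql, params=()):
    """Return SQLite's plan description for a query as a single string."""
    rows = conn.execute("EXPLAIN QUERY PLAN " + sql, params).fetchall()
    return " | ".join(row[-1] for row in rows)  # last column holds the detail text

conn = sqlite3.connect(":memory:")
conn.execute("CREATE TABLE users (id INTEGER PRIMARY KEY, email TEXT)")
lookup = "SELECT id FROM users WHERE email = ?"

# Without an index the planner has to scan the whole table.
plan_before = query_plan(conn, lookup, ("a@example.com",))

# With an index on email the planner can search the index instead.
conn.execute("CREATE INDEX idx_users_email ON users (email)")
plan_after = query_plan(conn, lookup, ("a@example.com",))
```

Printing plan_before and plan_after shows the plan switching from a table scan to a search that names the new index.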

In-Memory Database

A database that stores data primarily in RAM to achieve extremely low latency and high throughput for performance-critical workloads.

IOPS (Input/Output Operations Per Second)

A key storage performance metric that measures the number of read and write operations a system can handle per second, used to size disk infrastructure for I/O-intensive workloads.

Latency

The time delay between initiating a request and receiving a response, commonly used to measure system responsiveness and user experience.

Load Balancing

The distribution of database traffic across multiple nodes, used to optimize performance and prevent resource saturation under varying workloads.

Logical Replication

A replication method that transfers data changes at a logical (row) level rather than the storage level, enabling selective replication of specific tables or cross-version replication between database instances.

Managed Database

A database service where the provider handles provisioning, clustering, patching, backups, failover, and upgrades. Teams interact with the database itself rather than the infrastructure running it.

Materialized View

A stored result of a query that is periodically refreshed to improve performance for complex or frequently executed reads.

Multi-Cloud Deployment

A strategy that uses multiple cloud providers, often across several regions, to improve resilience and availability and to reduce vendor lock-in.

Network Partition

A failure condition where a cluster is split into isolated segments that cannot communicate, potentially causing nodes to continue operating independently. Proper partition-handling configuration (e.g., pause_minority in RabbitMQ) prevents split-brain scenarios.

Normalization

The process of structuring data in a relational database to minimize redundancy and improve data integrity, typically by organizing data into related tables.

NoSQL

A class of databases that use non-relational data models and typically do not enforce relationships or constraints at the database level. While relationships may still exist in the data model, they are managed by the application rather than the database, enabling greater flexibility and scalability, often at the cost of the stricter consistency guarantees that relational systems provide.

Observability

The ability to monitor, trace, and analyze database behavior using metrics, logs, and traces, used to detect issues early and maintain system reliability at scale.

Partitioning

Dividing a large table or dataset into smaller, independently managed segments based on a defined key (e.g., date range, tenant ID), used to improve query performance and manageability as data volume grows.

Physical Replication

A replication method that copies data at the storage block level, producing an exact byte-for-byte replica of the primary. Used when full database duplication and high-throughput replication are required.

Primary Node

The database or broker instance responsible for handling all write operations in a replicated architecture. In the event of failure, a standby node is promoted to take over the primary role.

Query Optimizer

The component that determines the most efficient execution plan for queries based on data distribution, table statistics, and available indexes.

RAG (Retrieval-Augmented Generation)

An AI architecture pattern where a language model’s responses are grounded by retrieving relevant context from a database at query time, rather than relying solely on training data. Commonly implemented using vector search against an embedding store.

Raft Consensus

A distributed consensus algorithm used to coordinate agreement across cluster nodes, ensuring a majority (quorum) of replicas agree on the order of operations. It is used by systems such as RabbitMQ quorum queues and modern distributed databases to enable predictable leader election and failover.

Read Replica

A copy of the primary database used to offload read traffic, improving performance in read-heavy workloads and reducing load on the primary node. Typically updated via asynchronous replication.

Recovery Point Objective (RPO)

The maximum acceptable amount of data loss measured in time after a failure event, used to determine backup frequency and replication requirements.

Recovery Time Objective (RTO)

The maximum acceptable duration to restore database services after a disruption, used to determine failover strategy and infrastructure investment.

Replication

The process of copying and synchronizing data across multiple nodes, used to improve availability, fault tolerance, and read scalability in production systems.

Replication Lag

The delay between data being written to the primary node and becoming visible on replicas. In asynchronous replication, lag can cause stale reads and increases the amount of data at risk (the effective RPO) if the primary fails.

Row-Oriented Storage

A storage format where complete rows are stored together, optimized for transactional workloads with frequent writes and point lookups.

Database Schema Design

The structure that defines tables, columns, relationships, constraints, and data types in a database. Schema design decisions shape query performance, application flexibility, and migration complexity throughout a system’s lifetime.

Scaling (Vertical/Horizontal)

Strategies for increasing database capacity either by upgrading resources on a single node (vertical) or distributing load across multiple nodes (horizontal). Horizontal scaling via sharding or clustering is required when a single node reaches its ceiling.

Semi-Synchronous Replication

A replication model where at least one replica confirms it has received and written the data before a transaction is committed, balancing durability and performance. It offers stronger durability than asynchronous replication and adds less write latency than fully synchronous replication.

Service-Level Agreement (SLA)

A formal contract defining uptime guarantees, performance targets, and support expectations for database services. Typically expressed as a percentage of annual availability (e.g., 99.99%).
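
An availability percentage translates directly into a downtime budget. The helper below is an illustrative calculation that assumes a 365-day year; the function name is not taken from any SLA tooling.

```python
def downtime_budget_minutes(availability_percent, period_days=365):
    """Return the downtime allowed by an availability target over a period."""
    total_minutes = period_days * 24 * 60
    return total_minutes * (1 - availability_percent / 100)

# 99.99% over a year allows roughly 52.56 minutes of downtime;
# 99.9% allows roughly 525.6 minutes (about 8.76 hours).
for target in (99.9, 99.99):
    print(f"{target}% -> {downtime_budget_minutes(target):.2f} minutes/year")
```

Each additional "nine" cuts the allowed downtime by a factor of ten, which is why higher targets usually require automated failover rather than manual recovery.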

Sharding

Distributing data horizontally across multiple independent database instances (shards) based on a shard key. Used when a single node’s storage or throughput capacity is insufficient, at the cost of increased architectural complexity.
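
One common routing scheme is to hash the shard key and take the result modulo the number of shards. The sketch below is a simplified illustration with hypothetical function names; it does not show resharding, and production systems often prefer consistent hashing or range-based schemes.

```python
import hashlib
from collections import Counter

def shard_for(key, shard_count):
    """Map a shard key to a shard number using a stable hash."""
    digest = hashlib.sha256(str(key).encode("utf-8")).digest()
    return int.from_bytes(digest[:8], "big") % shard_count

def distribution(keys, shard_count):
    """Count how many keys land on each shard."""
    return Counter(shard_for(key, shard_count) for key in keys)

# Many distinct keys spread across shards; a single repeated key does not.
spread = distribution([f"user-{i}" for i in range(1000)], 4)
skewed = distribution(["tenant-42"] * 100, 4)
```

The skewed case shows why shard key choice matters: if most traffic shares one key value, every request lands on the same shard regardless of how many shards exist.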

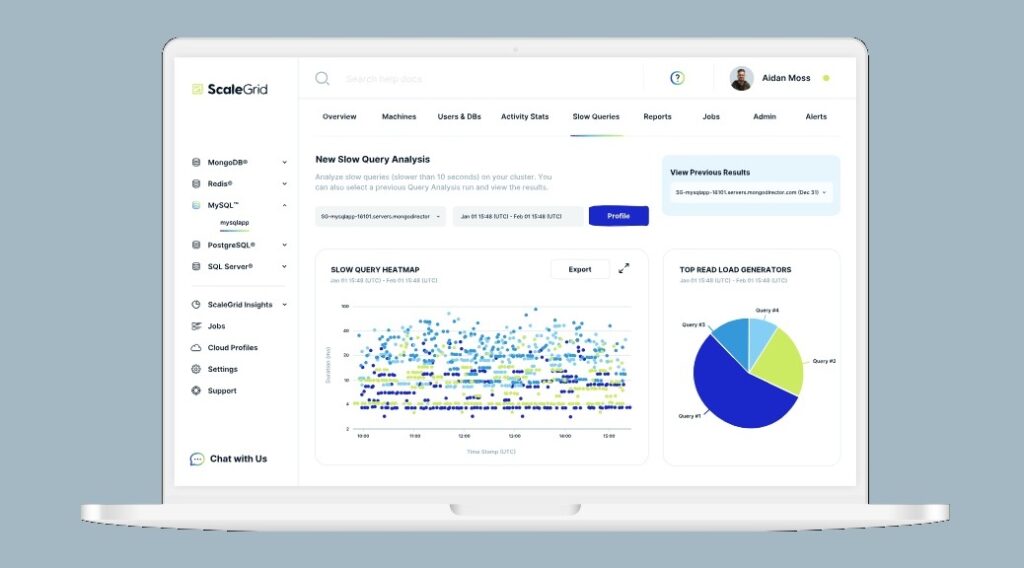

Slow Query

A query that exceeds expected execution time due to inefficient query plans, missing indexes, table bloat, or large data scans. Slow queries are identified via query logs and monitoring tools and are a primary target for performance optimization.

Split-Brain

A failure condition in a distributed cluster where a network partition causes multiple nodes to independently assume the primary role, each accepting writes. Results in data divergence that must be resolved on partition recovery. Mitigated by quorum-based designs and proper partition-handling configuration.

Standby Node

A replica that continuously receives replication data from the primary and is ready to be promoted in the event of a primary failure. May serve read traffic (hot standby) or remain idle until needed.

Streaming Replication

The continuous replication of database changes, often via transaction logs, to one or more replicas in near real-time, typically used for high availability and read scaling.

Switchover

A planned, operator-initiated role transition where a standby node is promoted to primary in a controlled manner. While designed to minimize disruption, some operations may be briefly interrupted during the transition. It contrasts with failover, which is triggered by an unplanned failure.

Synchronous Replication

A replication method where the primary waits for at least one replica to confirm receipt of a write before acknowledging the transaction to the client. Provides the strongest durability guarantee at the cost of added write latency.

Throughput

The number of database operations processed per unit of time, used to evaluate system capacity and performance under sustained load. Often measured in transactions per second (TPS) or messages per second (msg/s).

Transaction (ACID vs BASE Context)

A sequence of operations executed as a single unit of work. In ACID systems, transactions guarantee atomicity and isolation even under failure. In BASE systems, operations may succeed partially, with consistency achieved eventually. The right model depends on workload requirements.

Vector Search

A query technique that finds records most semantically similar to a given input by comparing high-dimensional vector embeddings using distance metrics (e.g., cosine similarity, L2 distance). Used in AI-powered applications for semantic search, recommendation, and RAG pipelines.
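
A brute-force version of the technique fits in a few lines. The sketch below ranks a handful of made-up two-dimensional vectors by cosine similarity; real embeddings have hundreds or thousands of dimensions, and vector databases use approximate indexes rather than comparing against every record.

```python
import math

def cosine_similarity(a, b):
    """Cosine of the angle between two non-zero vectors of equal length."""
    dot = sum(x * y for x, y in zip(a, b))
    norm_a = math.sqrt(sum(x * x for x in a))
    norm_b = math.sqrt(sum(y * y for y in b))
    return dot / (norm_a * norm_b)

def top_k(query, vectors, k=2):
    """Return the names of the k stored vectors most similar to the query."""
    scored = [(cosine_similarity(query, vec), name) for name, vec in vectors.items()]
    scored.sort(reverse=True)  # highest similarity first
    return [name for _, name in scored[:k]]

# Toy "embeddings" invented for this example.
vectors = {"cat": [1.0, 0.1], "dog": [0.9, 0.2], "car": [0.0, 1.0]}
nearest = top_k([1.0, 0.0], vectors, k=2)
```

Here the query vector points in nearly the same direction as "cat" and "dog", so those two are returned ahead of "car".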

Vertical Scaling

Increasing database capacity by adding more CPU, memory, or storage resources to a single node. Simpler than horizontal scaling but bounded by the maximum available hardware configuration.

Messaging / Queueing

AMQP (Advanced Message Queuing Protocol)

A standardized wire protocol that defines how messages are structured, routed, and transmitted between distributed systems. The foundational protocol of RabbitMQ.

At-Least-Once Delivery

A message delivery guarantee where each message is delivered one or more times. If a consumer fails before acknowledging a message, it may be redelivered, requiring the application to handle potential duplicates.

Dead Letter Queue (DLQ)

A queue that captures messages that could not be successfully delivered or processed — those that exceeded retry limits, expired via TTL, or were explicitly rejected. Provides a durable audit trail for failed messages to inspect and reprocess.
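
The retry-then-dead-letter flow can be sketched with an in-memory queue. This is a toy model with hypothetical names, not RabbitMQ's implementation; in RabbitMQ, dead-lettering is configured on the queue and handled by the broker.

```python
from collections import deque

def process_with_dlq(messages, handler, max_attempts=3):
    """Process messages, retrying failures and dead-lettering after max_attempts."""
    pending = deque((msg, 1) for msg in messages)  # (message, attempt number)
    processed, dead_letters = [], []
    while pending:
        msg, attempt = pending.popleft()
        try:
            handler(msg)
            processed.append(msg)
        except Exception:
            if attempt >= max_attempts:
                dead_letters.append(msg)             # give up: keep for inspection
            else:
                pending.append((msg, attempt + 1))   # requeue for another try
    return processed, dead_letters
```

Messages that keep failing end up in dead_letters instead of blocking the queue or being silently dropped, so they can be inspected and reprocessed later.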

Exchange (RabbitMQ)

A routing component that receives messages from producers and directs them to queues based on exchange type and binding rules. Types include direct (exact key match), topic (wildcard routing), fanout (broadcast to all bound queues), and headers.

Fanout

A message routing pattern where every message published to an exchange is delivered to all bound queues simultaneously, regardless of routing key. Used for event broadcasting where all subscribers need every event — audit logging, cache invalidation, notifications.

Flow Control

A mechanism used by systems such as RabbitMQ to regulate data flow when resource limits like memory or disk are reached. When thresholds are exceeded, publishers are temporarily blocked or slowed to prevent resource exhaustion and maintain system stability.

Message Acknowledgment (Ack/Nack)

A mechanism by which a consumer signals to the broker whether a message was successfully processed (ack) or should be requeued or dead-lettered (nack). Unacknowledged messages are redelivered on consumer disconnect, enabling at-least-once delivery.

Message Broker

A system that enables asynchronous communication between services by handling message routing, buffering, and delivery. Decouples producers from consumers so failures in one service do not cascade to others.

Message Persistence

A RabbitMQ message property (delivery_mode=2) that marks a message as persistent so the broker writes it to disk. Persistent messages in durable queues survive a broker restart, whereas transient messages held only in memory are lost, which makes missing persistence a common production misconfiguration.

Prefetch Count

A consumer-side flow control setting (basic_qos) that limits how many unacknowledged messages the broker delivers to a single consumer at once. A value of 10–50 is a common starting point; too high risks large requeue batches on crash, too low causes consumer idle time.

Publisher Confirms

A RabbitMQ reliability mechanism in which the broker sends an acknowledgment to the producer once it has taken responsibility for a message; for persistent messages in durable queues, this means after the message has been written to disk. Without publisher confirms, message loss during broker restart is possible and often goes undetected by the application.

Publisher / Consumer

The two roles in a messaging system: publishers (producers) emit messages to an exchange; consumers subscribe to queues and process messages. The two sides are decoupled: producers do not address consumers directly.

Queue

A durable or transient buffer that stores messages between producer and consumer. In RabbitMQ, queue type (classic, quorum, stream) determines replication behavior, durability guarantees, and failover characteristics.

Quorum Queue

A RabbitMQ queue type that uses Raft-based consensus to replicate messages across a configurable set of nodes (typically 3 or 5). Writes are acknowledged only after a quorum confirms receipt, providing strong durability guarantees and automatic leader election on failure. The recommended queue type for new production workloads.
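
The preference for 3 or 5 nodes follows from majority arithmetic. The two helpers below are illustrative and simply compute the majority size and the number of node failures a cluster of a given size can absorb while still reaching quorum.

```python
def quorum_size(nodes):
    """Smallest number of nodes that forms a strict majority."""
    return nodes // 2 + 1

def tolerated_failures(nodes):
    """Number of nodes that can fail while a majority remains available."""
    return nodes - quorum_size(nodes)

for n in (3, 4, 5):
    print(f"{n} nodes: quorum={quorum_size(n)}, tolerates {tolerated_failures(n)} failure(s)")
```

A 4-node cluster tolerates the same single failure as a 3-node cluster, which is why odd cluster sizes are the usual choice.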

Routing Key

A string value attached to a message that exchanges use to determine which queues receive it. In direct exchanges, routing keys must match exactly; in topic exchanges, they support wildcard patterns using * and #.
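
The wildcard rules can be sketched as a small matcher, where * stands for exactly one dot-separated word and # for zero or more words. The topic_matches function is an illustration of these rules written for this glossary, not RabbitMQ's own implementation.

```python
def topic_matches(pattern, routing_key):
    """Return True if a topic binding pattern matches a routing key."""
    return _match(pattern.split("."), routing_key.split("."))

def _match(pattern_words, key_words):
    if not pattern_words:
        return not key_words  # pattern exhausted: match only if key is too
    head, rest = pattern_words[0], pattern_words[1:]
    if head == "#":
        # '#' may absorb zero or more words: try every possible split point.
        return any(_match(rest, key_words[i:]) for i in range(len(key_words) + 1))
    if not key_words:
        return False
    if head == "*" or head == key_words[0]:
        return _match(rest, key_words[1:])  # '*' consumes exactly one word
    return False
```

For example, the pattern orders.*.created matches orders.eu.created but not orders.eu.us.created, while orders.# matches both.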

Stream Queue

A RabbitMQ queue type backed by an append-only replicated log, where messages are retained and can be read from any offset. Messages are not removed upon acknowledgment but are instead managed based on retention policies, enabling replay for use cases such as audit trails, event sourcing, and high-throughput streaming workloads.

Distributed Data & PostgreSQL

Coordinator Node

In a Citus distributed PostgreSQL cluster, the node that receives queries from clients, determines the execution plan, and distributes work to worker nodes. The coordinator does not store distributed table data itself.

Distributed Table

In distributed database systems such as PostgreSQL with Citus, a table whose rows are spread across multiple worker nodes according to a distribution column. Queries can execute in parallel across shards, improving throughput for large datasets.

Shard Key (Distribution Column)

The column or attribute used to determine how rows are distributed across shards or worker nodes in a distributed database. Shard key selection determines write locality, query efficiency, and the likelihood of hot partitions.

Time-Series Data

Data where each record is associated with a timestamp and records are written sequentially over time. Time-series workloads are characterized by high write volume, time-range queries, and retention policies. Common in IoT, monitoring, and financial systems.

Worker Node

In a Citus distributed PostgreSQL cluster, a node that stores shards of distributed tables and executes query fragments assigned by the coordinator. Adding worker nodes increases storage capacity and query parallelism.

DevOps & Site Reliability

Configuration Management

Maintaining consistent database configurations across environments to reduce drift and operational risk.

Continuous Delivery (CD)

A process that ensures database changes are consistently deployable through automated validation and release workflows.

Continuous Deployment

A fully automated release approach where validated database changes are deployed to production without manual intervention.

Continuous Integration (CI)

A practice where database changes are frequently integrated, tested, and validated through automated pipelines.

Deployment Pipeline

An automated workflow that manages the progression of database changes from development through testing to production.

Infrastructure as Code (IaC)

Managing database infrastructure using declarative code to enable consistent, repeatable, and version-controlled provisioning across environments.

Shift-Left Security

Integrating database security checks early in development workflows to enforce best practices and reduce risk before reaching production.

Shift-Left Testing

Validating database changes early in the development lifecycle to identify issues before production deployment.

SRE (Site Reliability Engineering)

An engineering discipline that applies software development principles to operations, using automation, SLOs, and error budgets to manage database reliability at scale.

Version Control for Schema

Tracking and managing database schema changes as versioned migration scripts, typically kept in a version control system and applied with migration tools (e.g., Flyway, Liquibase), to ensure traceability, rollback capability, and team coordination on migrations.

Managing databases at scale involves more than understanding individual concepts—it requires handling replication, failover, scaling, and performance as part of a cohesive system.

ScaleGrid helps teams simplify these operational challenges by automating database management across PostgreSQL, MySQL, MongoDB, Redis, and RabbitMQ deployments in the cloud.

If you’re evaluating how to improve reliability, performance, or scalability in your data infrastructure, explore how ScaleGrid approaches database operations in production environments.